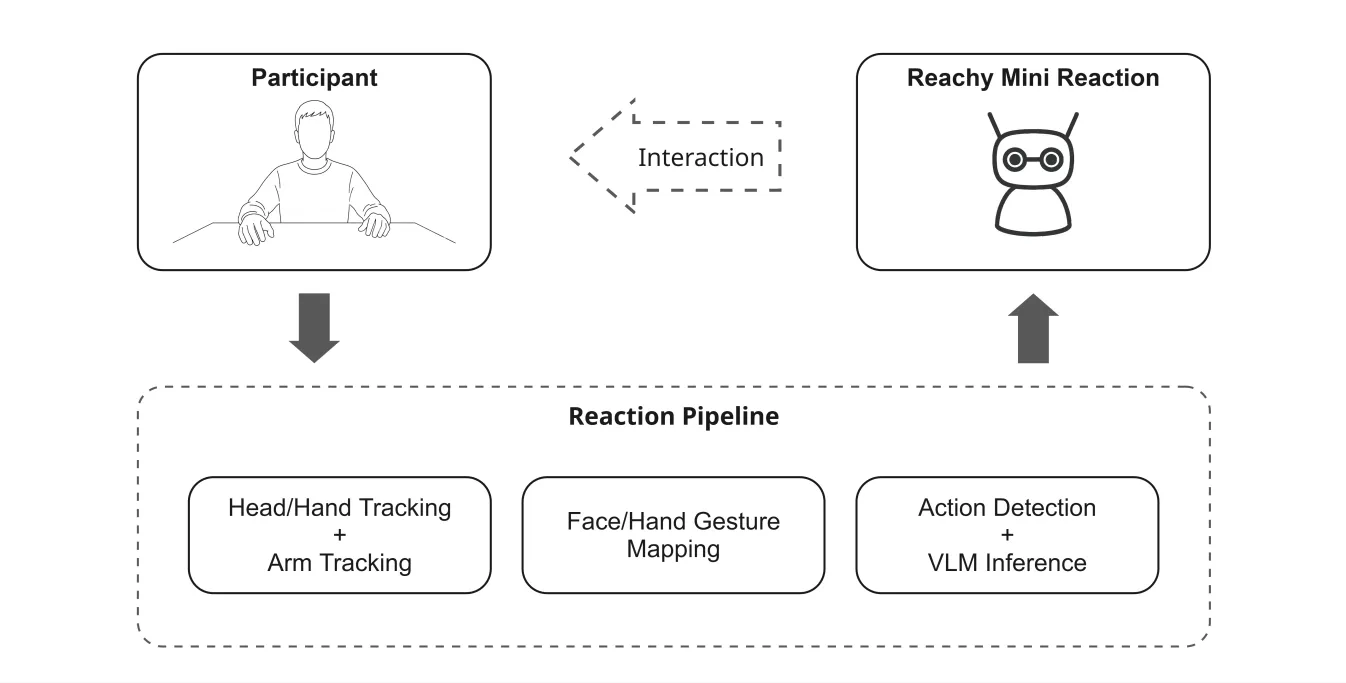

Non-verbal cues such as gesture, posture, and facial expression are central to human communication, yet remain largely unaddressed in social robotics. Existing human-robot interaction (HRI) systems either rely on language or require cloud inference, which limits real-time, expressive non-verbal response. We present a fully offline, real-time non-verbal interaction system for Reachy Mini, a compact humanoid robot, that perceives and autonomously responds to human body motion and emotion.

Our system combines continuous head and arm mirroring, hand gesture recognition, facial emotion detection, and a locally hosted Vision Language Model (VLM) that selects from a library of 81 pre-recorded expressions. A priority scheduler coordinates these four concurrent perception layers.
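The arbitration step described above can be illustrated with a minimal sketch. It assumes that each perception layer may propose at most one action per tick and that the scheduler forwards the proposal from the highest-priority layer. The layer names, the action labels, and in particular the priority ordering are hypothetical; the source does not specify them.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical priority order (lower number wins). The actual ordering
# used by the system is not stated in the source.
PRIORITY: Dict[str, int] = {
    "gesture": 0,    # hand gesture recognition
    "emotion": 1,    # facial emotion detection
    "vlm": 2,        # VLM-selected pre-recorded expression
    "mirroring": 3,  # continuous head and arm mirroring
}


@dataclass(frozen=True)
class Proposal:
    layer: str     # perception layer that produced the proposal
    action: str    # illustrative action label
    priority: int  # copied from PRIORITY for easy comparison


def select_action(proposals: Dict[str, Optional[str]]) -> Optional[Proposal]:
    """Return the proposal from the highest-priority layer, or None if idle.

    `proposals` maps a layer name to its proposed action for this tick,
    or to None when the layer has nothing to suggest.
    """
    candidates = [
        Proposal(layer, action, PRIORITY[layer])
        for layer, action in proposals.items()
        if action is not None
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda p: p.priority)


if __name__ == "__main__":
    tick = {
        "mirroring": "track_head",
        "gesture": "wave_back",
        "emotion": None,
        "vlm": None,
    }
    chosen = select_action(tick)
    print(chosen.layer, chosen.action)  # gesture wave_back
```

In this sketch the always-available mirroring layer acts as a fallback, so a discrete event such as a recognised gesture pre-empts it for that tick. A real scheduler would likely also need to handle action duration and interruption, which are omitted here.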

Running entirely on consumer hardware with 3 s end-to-end latency, the system produces behaviour convincing enough that participants in a Turing test-style study could not reliably distinguish it from human teleoperation (38% overall accuracy, 8 of 21 judgements correct, below the 50% chance baseline). These results suggest that lightweight, offline multimodal perception is sufficient to produce socially credible non-verbal robot behaviour, opening a path toward accessible and deployable social HRI systems.

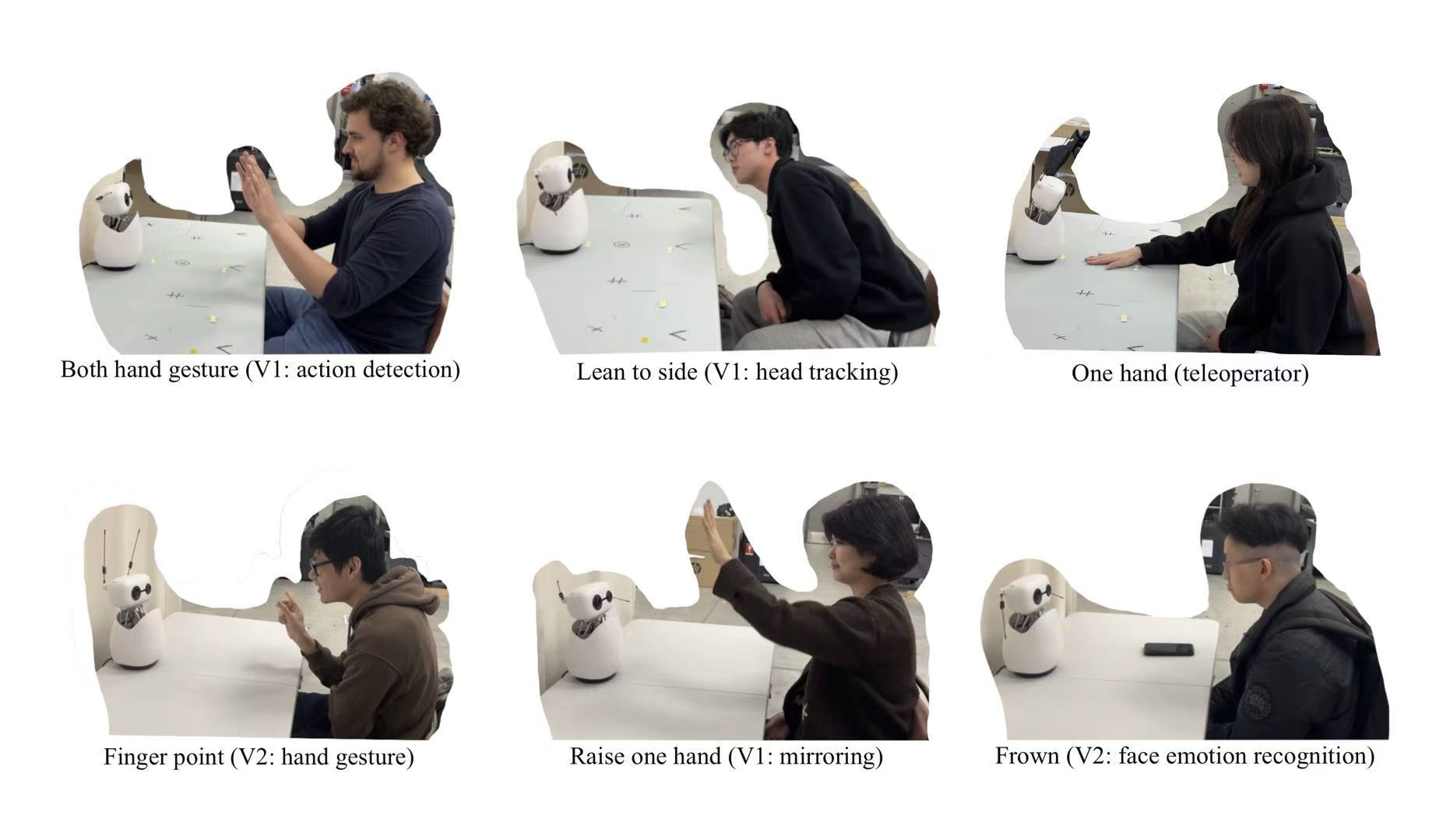

Top row, left to right: a participant performing a two-handed gesture that triggers action detection (V2); leaning to the side with head tracking active (V2); a teleoperator controlling the robot with one hand (V2). Bottom row, left to right: a participant using a finger-point gesture for hand gesture recognition (V3); raising one hand while the mirroring layer tracks arm elevation (V2); frowning, with the expression detected by the facial emotion recognition module (V3).

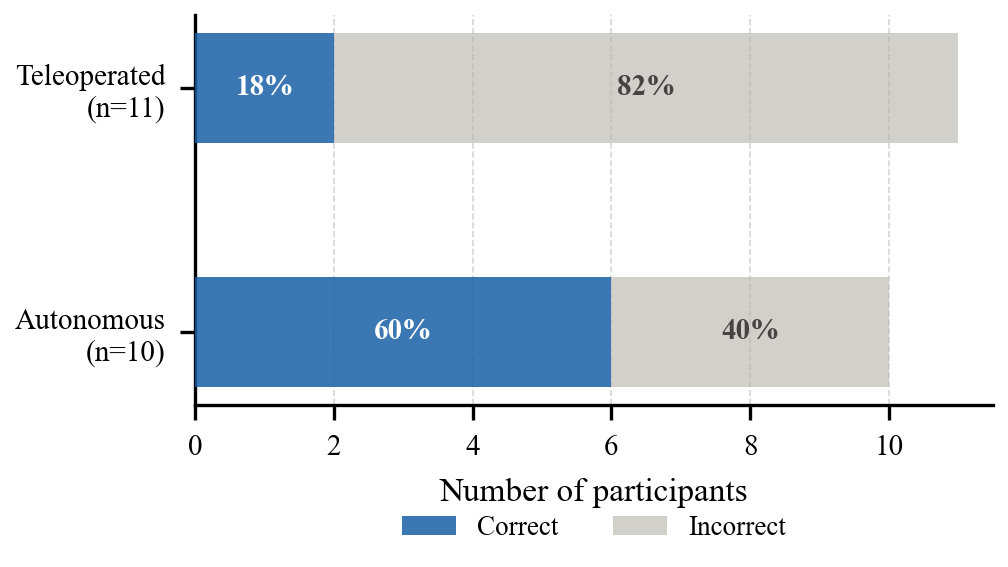

| Pipeline | Condition | Correct | Total | Accuracy |
|---|---|---|---|---|
| V2 | Autonomous | 4 | 5 | 80% |
| V2 | Teleoperated | 1 | 4 | 25% |
| V3 | Autonomous | 2 | 5 | 40% |
| V3 | Teleoperated | 1 | 7 | 14% |
| V2 + V3 | Autonomous | 6 | 10 | 60% |
| V2 + V3 | Teleoperated | 2 | 11 | 18% |

Participants in the Teleoperated condition identified the correct control mode at only 18% (2/11), well below the 50% chance baseline, indicating a strong autonomy-assumption bias. Participants in the Autonomous condition performed closer to chance at 60% (6/10).
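The pooled figures quoted above follow directly from the per-pipeline counts in the table. The short sketch below reproduces that arithmetic; the counts are copied from the table, while the variable and function names are illustrative only.

```python
# (correct, total) judgements per pipeline and condition, from the table.
counts = {
    ("V2", "Autonomous"): (4, 5),
    ("V2", "Teleoperated"): (1, 4),
    ("V3", "Autonomous"): (2, 5),
    ("V3", "Teleoperated"): (1, 7),
}


def pooled(condition=None):
    """Pool counts across pipelines, optionally restricted to one condition."""
    rows = [
        value
        for (_pipeline, cond), value in counts.items()
        if condition is None or cond == condition
    ]
    correct = sum(c for c, _ in rows)
    total = sum(t for _, t in rows)
    return correct, total, correct / total


if __name__ == "__main__":
    for label in ("Autonomous", "Teleoperated", None):
        c, t, acc = pooled(label)
        print(f"{label or 'Overall'}: {c}/{t} = {acc:.0%}")
    # Autonomous: 6/10 = 60%
    # Teleoperated: 2/11 = 18%
    # Overall: 8/21 = 38%
```

The overall row (8/21) is the 38% accuracy reported in the abstract.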

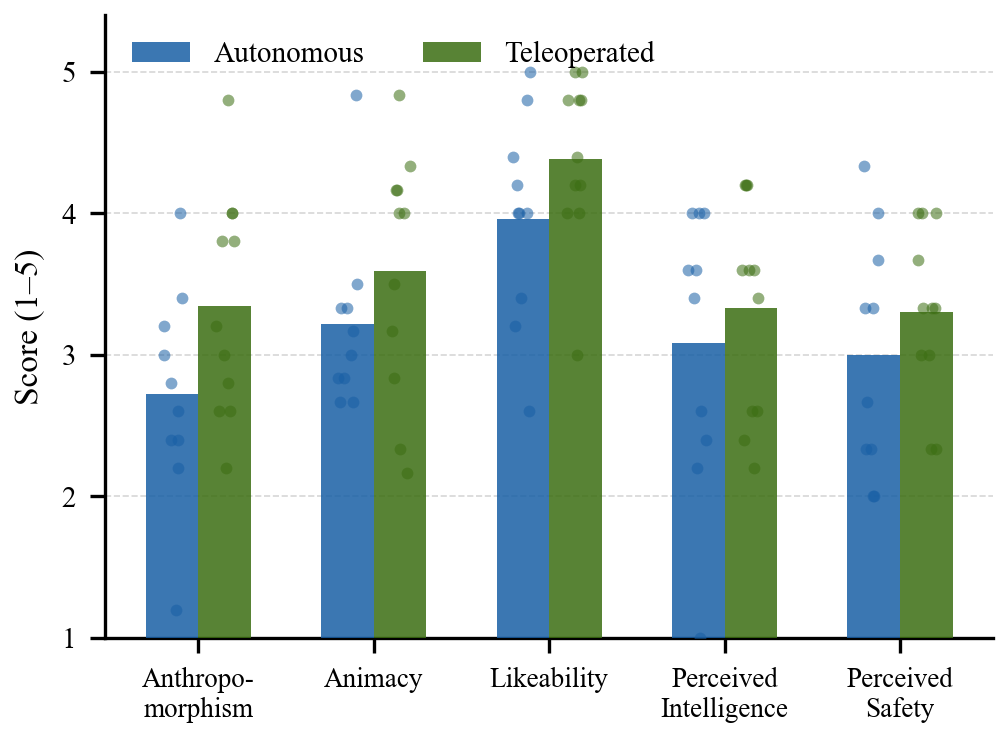

| Condition | Anthropomorphism | Animacy | Likeability | Intelligence | Safety |
|---|---|---|---|---|---|
| Autonomous | 2.72 | 3.22 | 3.96 | 3.08 | 3.00 |
| Teleoperated | 3.35 | 3.59 | 4.38 | 3.33 | 3.30 |

Bars show condition means; overlaid points show individual observations (N=21). Per the means reported above, Teleoperated interactions scored higher on all five subscales, including Perceived Safety (3.30 vs. 3.00). Given the pilot-study sample size, these differences should be interpreted as descriptive trends only.